This feature is available as part of the Honeycomb Enterprise and Pro plans.

Define Your SLO

When creating an SLO, you must define a service level indicator (SLI) that your SLO will use to evaluate your level of success. To determine a suitable SLI, identify your qualified events: the events that contain information relevant to the SLI. With these qualified events in mind, ask yourself: “Over what period of time do I expect what percentage of qualified events to pass my SLO?” For example: “I expect 99% of qualified events to succeed over every 30 days.” In this example, 99% is the target percentage of success and 30 days is the time period being measured.

Your SLO will use your SLI alongside your identified target percentage and time period to evaluate status.
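The way the SLI, target percentage, and time period combine can be sketched in Python. This is a simplified model, not Honeycomb's implementation: it assumes each event carries a timestamp and the result of the SLI (`True` for success, `False` for failure, `None` for an event that is not qualified), and the names `evaluate_slo` and `SloStatus` are hypothetical. The error budget here is event-based: the share of qualified events that the target allows to fail.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import Iterable, Optional, Tuple


@dataclass
class SloStatus:
    qualified: int           # events where the SLI returned True or False
    succeeded: int           # events where the SLI returned True
    success_pct: float       # succeeded / qualified, as a percentage
    budget_events: float     # failures the target allows in this window
    budget_remaining: float  # allowed failures not yet spent (negative = over budget)


def evaluate_slo(
    events: Iterable[Tuple[datetime, Optional[bool]]],
    target_pct: float,
    period_days: int,
    now: datetime,
) -> SloStatus:
    """Hypothetical helper: evaluate an SLO over the trailing time period."""
    window_start = now - timedelta(days=period_days)
    qualified = succeeded = 0
    for ts, sli in events:
        # Skip events outside the time period and events the SLI does not qualify.
        if ts < window_start or ts > now or sli is None:
            continue
        qualified += 1
        if sli:
            succeeded += 1
    failed = qualified - succeeded
    # Assumption: with no qualified events, treat the SLO as fully successful.
    success_pct = 100.0 * succeeded / qualified if qualified else 100.0
    # Error budget: the fraction (100 - target)% of qualified events may fail.
    budget_events = qualified * (100.0 - target_pct) / 100.0
    return SloStatus(qualified, succeeded, success_pct, budget_events, budget_events - failed)
```

With a 99% target over 30 days, 1,000 qualified events in the window allow 10 failures; if 5 of them have failed, 5 failures remain in the budget. Events older than 30 days and unqualified events do not count toward either number.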

When you choose a target percentage, base it on your current state. After you create your SLI calculated field, you can find your current state by running a COUNT query grouped by that field.
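A minimal sketch of turning that grouped COUNT into a baseline percentage, assuming the results are available as a mapping from each SLI value (`True`, `False`, or `None` for unqualified events) to its count. The function name `baseline_from_counts` and this result shape are hypothetical illustrations, not a Honeycomb API.

```python
from typing import Dict, Optional


def baseline_from_counts(counts: Dict[Optional[bool], int]) -> float:
    """Return the current success percentage from COUNT results grouped by the SLI field.

    Hypothetical input shape: {True: n_success, False: n_failure, None: n_unqualified}.
    """
    succeeded = counts.get(True, 0)
    failed = counts.get(False, 0)
    qualified = succeeded + failed  # unqualified (None) rows are excluded
    if qualified == 0:
        raise ValueError("no qualified events; check the SLI before picking a target")
    return 100.0 * succeeded / qualified
```

For example, counts of `{True: 9870, False: 130, None: 2000}` give a baseline of 98.7%. Choosing a target above the baseline, such as 99.9%, means the SLO would start out failing more events than its budget allows.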

Create Your SLO

To create an SLO:- From the left sidebar, select SLOs.

- Select New SLO.

-

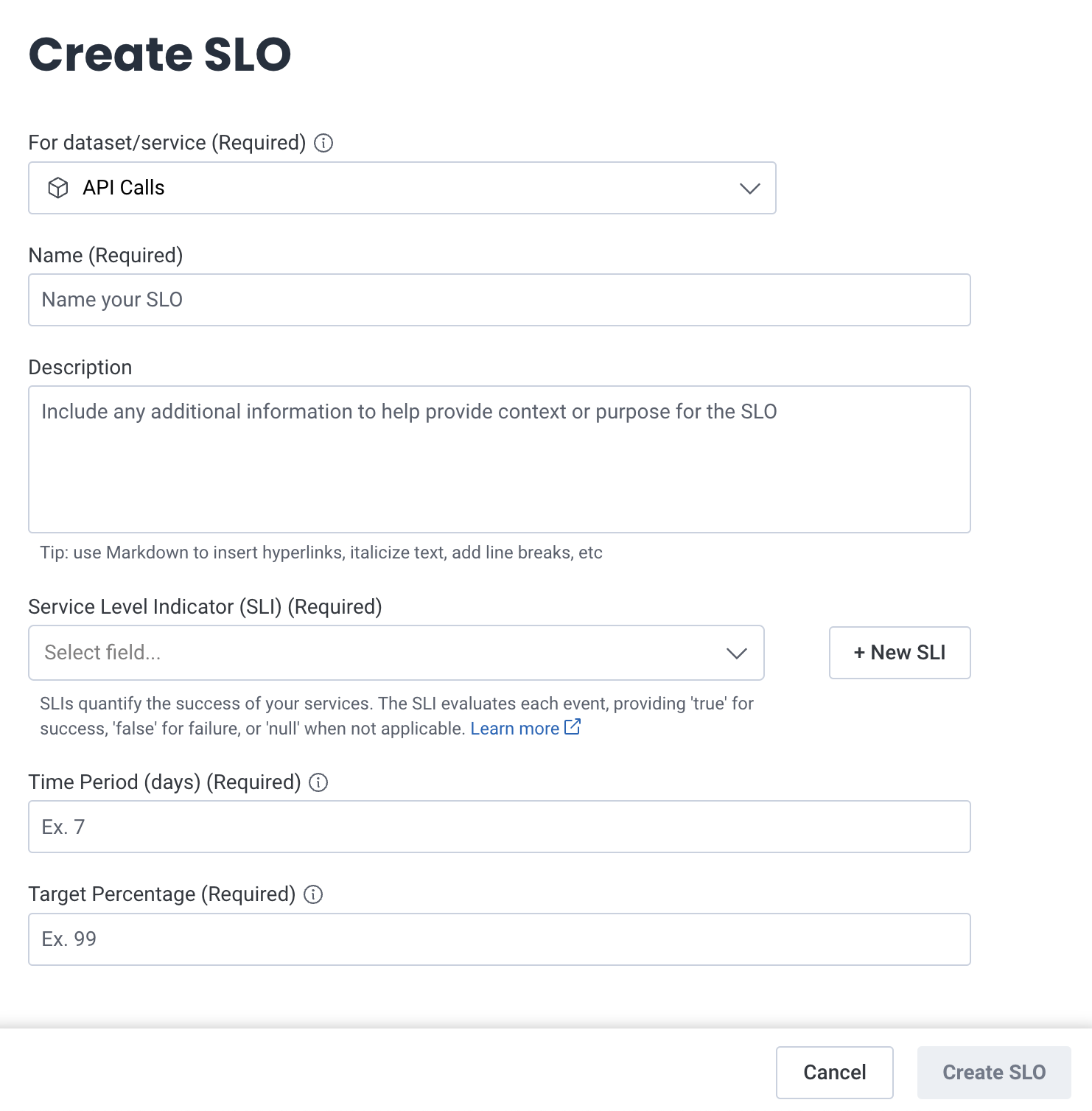

Enter details for your SLO:

| Field | Description |
|---|---|
| Dataset/service | Dataset(s) or service(s) to which you want your SLO to apply. You can select up to 10. If your SLO applies to only one dataset or service and you have already created an SLI as a Calculated Field, you must create your SLO inside the same dataset that contains that Calculated Field. If your SLO applies to multiple datasets or services, your SLI must be created as a shared Calculated Field. |
| Name | Name of your SLO. |
| Description | Information that provides context or purpose for the SLO. You may use Markdown to insert links and format text. |
| Tags | Labels that organize and group related SLOs, making them easier to find and filter by tag. Enter tags in `key:value` format (for example, `area:pipelines` or `team:prism`). Use Assign to add up to 10 tags per SLO. Tag keys can contain letters only, up to 32 characters. Tag values can include alphanumeric characters and the special characters `/` and `-`, up to 128 characters. |
| Service Level Indicator (SLI) | SLI, expressed as a Calculated Field, that your SLO will use to evaluate your level of success. If you have already defined your SLI in a Calculated Field, select it from the dropdown. Otherwise, select + New SLI and create your SLI. An SLI created from within this SLO is created at the environment level, so you can use it with multiple services or datasets if desired. |
| Time Period | Time period (in days) to which this SLO applies. |
| Target Percentage | Target percentage of qualified events you expect to succeed. |

- Select Create SLO.
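The tag rules in the Tags field can be checked before you enter them. The sketch below is one reading of those rules, with assumptions labeled: "letters" and "alphanumeric" are taken to mean ASCII, empty keys or values are rejected, and the name `tag_errors` is hypothetical. Honeycomb's own validation may differ.

```python
import re
from typing import List

MAX_TAGS = 10
# Assumption: keys are 1-32 ASCII letters.
KEY_RE = re.compile(r"[A-Za-z]{1,32}")
# Assumption: values are 1-128 ASCII alphanumerics, '/' or '-'.
VALUE_RE = re.compile(r"[A-Za-z0-9/-]{1,128}")


def tag_errors(tags: List[str]) -> List[str]:
    """Hypothetical helper: return a list of problems with the given key:value tags."""
    errors = []
    if len(tags) > MAX_TAGS:
        errors.append(f"too many tags: {len(tags)} (max {MAX_TAGS})")
    for tag in tags:
        # Split on the first ':'; any further ':' lands in the value and fails VALUE_RE.
        key, sep, value = tag.partition(":")
        if not sep:
            errors.append(f"{tag!r}: expected key:value")
            continue
        if not KEY_RE.fullmatch(key):
            errors.append(f"{tag!r}: key must be 1-32 letters")
        if not VALUE_RE.fullmatch(value):
            errors.append(f"{tag!r}: value must be 1-128 alphanumerics, '/' or '-'")
    return errors
```

An empty list means every tag satisfies these rules; for example, `area:pipelines` and `team:prism` both pass, while `team_1:prism` fails because the key contains `_` and a digit.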

Create Your SLO from a Template

You can create an SLO from a template. SLO Templates create a pre-configured SLO based on your target datasets. If no SLOs exist yet, the SLOs page displays the available SLO Templates and an option to create an SLO from scratch. Otherwise, the Templates section in the List view displays the SLO Templates available to you.

To create an SLO from a template:

- Navigate to SLOs using the left navigation menu.

- Select from the available Template options. A creation modal appears.

- Select the text box to view a list of available datasets.

- Select up to 24 datasets to which this SLO will apply.

- Select Next to finish. The SLO Detailed View for the new SLO appears.

Available SLO Templates

| Template | SLO Name | SLO Description |
|---|---|---|
| Service Uptime | Uptime: At Least 90.0% of HTTP Requests Succeed | This SLO ensures that at least 90.0% of HTTP requests return successful responses (status codes below 400), minimizing downtime and improving user experience. This SLO was auto-generated via a template. |
| Service Responsiveness | Responsiveness: At Least 95% of HTTP Requests are Fast | This SLO ensures that 95% of HTTP requests complete within 1000 milliseconds, maintaining fast and reliable responses for users. This SLO was auto-generated via a template. |
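The success conditions in the template descriptions can be expressed as SLI functions that return `True`, `False`, or `None` (not qualified). This is an illustrative sketch only: the field names `http.status_code` and `duration_ms` are assumptions, "within 1000 milliseconds" is read as inclusive, and the Calculated Fields the templates actually generate may differ.

```python
from typing import Any, Mapping, Optional


def uptime_sli(event: Mapping[str, Any]) -> Optional[bool]:
    """Service Uptime: an HTTP request succeeds if its status code is below 400."""
    status = event.get("http.status_code")  # hypothetical field name
    if status is None:
        return None  # not an HTTP request, so not a qualified event
    return status < 400


def responsiveness_sli(event: Mapping[str, Any]) -> Optional[bool]:
    """Service Responsiveness: an HTTP request succeeds if it completes within 1000 ms."""
    duration = event.get("duration_ms")  # hypothetical field name
    if event.get("http.status_code") is None or duration is None:
        return None  # only HTTP requests with a duration are qualified
    return duration <= 1000  # assumption: exactly 1000 ms still counts as fast
```

Returning `None` for events that are not HTTP requests keeps them out of the qualified-event count, so they neither succeed nor fail the SLO.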

Next Steps

Now that you have an SLO, you can:

- Monitor the SLOs for your team

- Set up Burn Alerts, which provide notifications related to your SLO budget

- Monitor sets of related SLOs alongside your queries by adding SLOs to a Board