A self-service migration from Classic Datasets to Environments is available to all teams. When you migrate, data sent to Honeycomb changes its destination from the dataset(s) in Classic to the new Environment(s). Migration assistance is available for Enterprise teams. Contact your Honeycomb Success Representative for more details.

Self-Service Migration Steps

Requirements

Before proceeding, complete the Migration Preparation checklist to ensure an easier migration experience.

Overview

The migration process includes the following tasks:

- Create a New Environment

- Update instrumentation to Environment and Services-compatible versions

- Migrate Refinery (if applicable)

- Send your Honeycomb data to an Environment

- Recreate your Honeycomb Feature Configurations in your Environment

Create A New Environment

First, create a new Environment in Honeycomb. You must be a team owner in order to create an Environment. A new Environment creates a new API Key by default. After creating a new Environment, additional API Keys may be created according to best practices. In a later step, use this API Key to update your instrumentation and to tell Honeycomb to send the data to this new Environment. To create a new Environment in Honeycomb:-

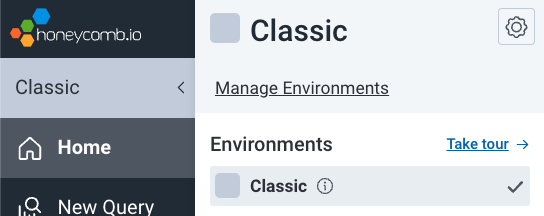

Select the label below the Honeycomb logo in the left navigation menu to reveal the Environments list.

When working within Honeycomb Classic, a Classic label with a gray background appears.

- Select Manage Environments. The Environments summary appears.

- Select Create Environment in the top right corner. A modal appears.

- Enter a name (required) for the Environment. Optionally, enter a description for the Environment and choose a representative color from the dropdown.

  An Environment cannot be renamed after it is created, but it can be deleted. Once created, you can update an Environment’s color and description in Environment Settings.

- Select Create Environment. The new Environment appears in the Environments summary.

- Select View API Keys in your new Environment’s row. The Environment’s API Keys appear in a list.

- Use the Copy icon to copy the API Key for use in your instrumentation.

  An Environment-wide API Key must have `send events` and `create datasets` permissions to send events and create new datasets from traces.

Update Instrumentation Version

At this point in the migration, ensure each tracing instrumentation library is updated to at least the minimum version that supports Environments and Services. We recommend updating to the latest version to get a library’s full benefits and features. Reference the instrumentation version updates list from your migration preparation.

Refinery

If you use Refinery, first complete the steps in the Refinery Migration section before continuing. Otherwise, proceed to the next step.

Send Your Honeycomb Data to an Environment

In your instrumentation, replace each Honeycomb API Key with an API Key from your new Environment. Changing your API Keys causes data to flow into your new Environment and new datasets to be created automatically. Any reference to the Honeycomb API needs an updated API Key.

Trace data is linked to an Environment, which is identified implicitly by the API Key used.

For Service datasets, specifying a Dataset name is no longer required to submit trace data.

Any General dataset still requires a Dataset name to submit data.

General datasets include logs and metrics, and you should have identified them in your migration preparation.

After updating your API Keys, you should see:

- Data appearing in the new Environment

- New Service datasets, based on `service.name`

- New General datasets, as named in the existing instrumentation

- Your Classic Environment no longer receiving data
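
As a rough illustration of how the API Key and dataset name work together, the following Python sketch builds (but does not send) a request to Honeycomb's Events API for a General dataset. The API Key in the `X-Honeycomb-Team` header selects the Environment implicitly, while the dataset name remains part of the URL. The helper name `build_event_request`, the dataset name `app-logs`, and the placeholder key are hypothetical. Most teams would rely on an instrumentation library rather than hand-built requests.

```python
import json
import urllib.parse
import urllib.request

HONEYCOMB_API = "https://api.honeycomb.io"


def build_event_request(api_key: str, dataset: str, fields: dict) -> urllib.request.Request:
    """Build a POST request for one event; the caller decides when to send it."""
    # The dataset name stays in the URL path; the Environment is not named anywhere.
    url = f"{HONEYCOMB_API}/1/events/{urllib.parse.quote(dataset, safe='')}"
    return urllib.request.Request(
        url,
        data=json.dumps(fields).encode("utf-8"),
        headers={
            # The Environment is implied by whichever API Key is supplied here.
            "X-Honeycomb-Team": api_key,
            "Content-Type": "application/json",
        },
        method="POST",
    )


# Placeholder key and hypothetical dataset name, for illustration only.
request = build_event_request("YOUR_ENVIRONMENT_API_KEY", "app-logs", {"level": "info"})
# urllib.request.urlopen(request)  # uncomment to actually send the event
```

Swapping only the API Key, and leaving the dataset name untouched, is what redirects this event from Classic to the new Environment.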

Validate New Datasets in Environment

Reference the Dataset and Services lists from your migration preparation to ensure all expected Datasets are created. Use Service Map to determine if Services appear as expected in their new Environment.

Recreate your Honeycomb Configurations in your Environment

Recreate the configuration for each Honeycomb feature using one of the following options:

- The Honeycomb Classic to Environments & Services Migration Tool: Use our web-based GUI, which runs on your local machine and guides you through the process of mapping Honeycomb Classic configuration objects to Environments & Services, including:

  - Triggers

  - SLOs

  - Boards

  - Calculated Fields

  - Saved Queries

  - Columns

- The Honeycomb UI: Use our UI to manually recreate each feature or object. This path is recommended only if:

  - you have fewer than 50 combined boards, triggers, and SLOs

  - you wish to significantly refactor any or all of the above features

The Honeycomb features and objects to recreate include:

- Attributes, or fields

- Calculated Fields

- Query Annotations

- Boards

- Triggers

- SLOs

- Marker Configurations

- Dataset Definitions

After Migration

Clean up Configuration

After migration, review your Environment and configurations for any errors. For new Honeycomb configurations that are service-specific, remove any unnecessary service name references (service.name).

Conclude Classic Usage

If no longer needed, delete your Classic Environment using Delete Environments in Environment Settings. You must be a team owner to delete an Environment. You may want to keep your Classic Environment if:

- Your Classic permalinks are still relevant

- You still have data in Classic that you want to reference

Classic data will age out based on your retention period.

We encourage you to delete your Classic Environment once finished with it.

Questions and Support

Join the #discuss-hny-classic channel in our Pollinators Community Slack to ask questions and learn more. For Pro and Enterprise users, contact Honeycomb Support or email support@honeycomb.io.

Refinery Migration Guide

To migrate Refinery from Classic to Environments:

- Update Refinery instrumentation to a version that supports Environments

- Update Refinery Sampling Rules configuration in `rules.toml` to support Environments as needed

- Update Refinery General Configuration in `config.toml` to use the new Environment API Key(s) and Environment name(s)

- [Optional] Add `EnvironmentCacheTTL`, an optional Refinery configuration option, to `config.toml`

Update Refinery Instrumentation Version

Update your Refinery instrumentation to a version that supports Environments and Services. We recommend updating to the latest available version. The minimum required version for Refinery is version 1.12.0.

Refinery Sampling Rules Configuration

As needed, update your Refinery Sampling Rules in `rules.toml` to include Environment Sampling Rules.

Environment names are case-sensitive in your Refinery configuration.

If an Environment and a Classic Dataset use the same name, set `DatasetPrefix` in the `config.toml` configuration.

When Refinery receives telemetry using a Classic Dataset API key, it uses the DatasetPrefix to resolve rules using the format {prefix}.{dataset}.

- Set the dataset prefix (`DatasetPrefix`) in `config.toml`.

- Update your Classic datasets in `rules.toml` with the `DatasetPrefix` value. For example, with a prefix of classic, sampling rules that define the Environment “Hello world”, the Environment “Production”, and a “production” dataset in Honeycomb Classic configure the “production” Honeycomb Classic Dataset as `[classic.production]`.
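
To make the naming scheme concrete, here is a minimal Python sketch of how a rule name could be derived from the `{prefix}.{dataset}` format. It is an illustration of the convention only, not Refinery's actual implementation. The function name `sampler_rule_name` and the `RULE_SECTIONS` set are hypothetical, and the prefix value "classic" is taken from the `[classic.production]` example in the text.

```python
def sampler_rule_name(name: str, is_classic_key: bool, dataset_prefix: str = "") -> str:
    """Return the rules section name to look up for incoming telemetry.

    `name` is the dataset name for a Classic key, or the Environment name otherwise.
    """
    if is_classic_key and dataset_prefix:
        # Classic datasets are namespaced as {prefix}.{dataset}.
        return f"{dataset_prefix}.{name}"
    return name


# Hypothetical section names matching the example described in the text.
RULE_SECTIONS = {"Hello world", "Production", "classic.production"}

classic_rule = sampler_rule_name("production", is_classic_key=True, dataset_prefix="classic")
environment_rule = sampler_rule_name("Production", is_classic_key=False, dataset_prefix="classic")
```

In this sketch the Classic dataset "production" and the Environment "Production" resolve to different rule names, which is the collision the prefix is meant to avoid.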

Refinery General Configuration

With Environments, Refinery can determine whether an API key is a Classic Dataset key (32 characters) or an Environment key (22 or 23 characters) when receiving telemetry data. For Classic Dataset keys, Refinery follows the pre-existing behavior and uses the Dataset present in the event to reference the sampler definition. For Environment keys, Refinery uses the API key to call the Honeycomb API and retrieve the Environment name, which is cached. Then, Refinery uses that value to look up the sampler definition. (Change how long Refinery caches the Environment name value with `EnvironmentCacheTTL`.)
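
The length-based distinction can be sketched in a few lines of Python. This is a simplified illustration based only on the key lengths stated in the text; Refinery's real check may consider more than length, and the function name `classify_api_key` is hypothetical.

```python
CLASSIC_KEY_LENGTH = 32
ENVIRONMENT_KEY_LENGTHS = (22, 23)


def classify_api_key(api_key: str) -> str:
    """Classify a key as "classic", "environment", or "unknown" by its length."""
    length = len(api_key)
    if length == CLASSIC_KEY_LENGTH:
        # Classic: the sampler definition is found from the event's Dataset.
        return "classic"
    if length in ENVIRONMENT_KEY_LENGTHS:
        # Environment: the sampler definition is found from the Environment name.
        return "environment"
    return "unknown"
```

A quick way to use it during migration is to run it over the keys configured in your services and confirm none still classify as "classic".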

Evaluate and update your Refinery’s General configuration with:

- the new Environment API Key(s) for `APIKeys` if not accepting all API Keys

- `DatasetPrefix` if an Environment and a Classic Dataset use the same name

- (optional) `EnvironmentCacheTTL` if change is needed

EnvironmentCacheTTL

`EnvironmentCacheTTL` is a new optional Refinery configuration option for Environments users. When given an Environment API key, Refinery looks up the associated Environment through an HTTP call to Honeycomb. The `EnvironmentCacheTTL` configuration controls how long the retrieved Environment name is cached.

The default is 1 hour (“1h”). This option is not eligible for live reload.

To cache for a different length of time than the default 1 hour, set `EnvironmentCacheTTL` in `config.toml`.
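
The effect of the TTL can be pictured with a small Python sketch of a time-limited cache. This is a conceptual model only, not Refinery's code: the class name `EnvironmentNameCache`, the injected `lookup` callable (standing in for the HTTP call to Honeycomb), and the injectable `clock` are all assumptions made for illustration.

```python
import time
from typing import Callable, Dict, Tuple


class EnvironmentNameCache:
    """Cache Environment names per API key for a fixed time-to-live."""

    def __init__(
        self,
        lookup: Callable[[str], str],
        ttl_seconds: float = 3600.0,  # mirrors the documented 1 hour default
        clock: Callable[[], float] = time.monotonic,
    ) -> None:
        self._lookup = lookup
        self._ttl = ttl_seconds
        self._clock = clock
        self._entries: Dict[str, Tuple[str, float]] = {}

    def get(self, api_key: str) -> str:
        now = self._clock()
        entry = self._entries.get(api_key)
        if entry is not None and now < entry[1]:
            # Still fresh: reuse the cached name without another lookup.
            return entry[0]
        # Missing or expired: look the name up again and reset the expiry.
        name = self._lookup(api_key)
        self._entries[api_key] = (name, now + self._ttl)
        return name
```

A shorter TTL means more frequent lookups; a longer TTL means fewer lookups but cached names that can go stale for longer.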

Refinery Migration Complete

At this point, your Refinery instance should have:

- an instrumentation version that supports Environments

- Sampling Rules that contain Classic and Environment Sampling Rules as needed

- General Configuration that includes any updated configuration as needed